
-

Jenny’s Daily Drivers: Going 32-Bit With SliTaz In 2026


We’re used to seeing technologies move with the times, and Hackaday readers are likely among the group who spend the most time doing that and are most aware of it. There’s one technology we’ll all know that has quietly slipped away for most of us, almost without a word: I speak, of course, of 32-bit computing. For most of us that means 32-bit computing on x86 machines, and since the 64-bit x86 instruction set we all now use has been around for nearly a quarter century, its 32-bit ancestor is now ancient history.

In the world of software that means we’re now in an era of operating systems and browsers dropping 32-bit support, so increasingly keeping a 32-bit machine up to date will become a challenge. That sounds like something just painful and difficult enough to subject to a Daily Drivers piece, so just how practical is it to use a 32-bit machine for my daily work in 2026?

2005 Just Gave Me A Computer

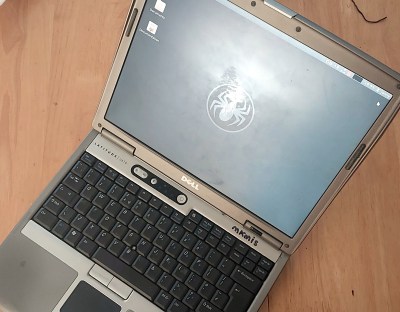

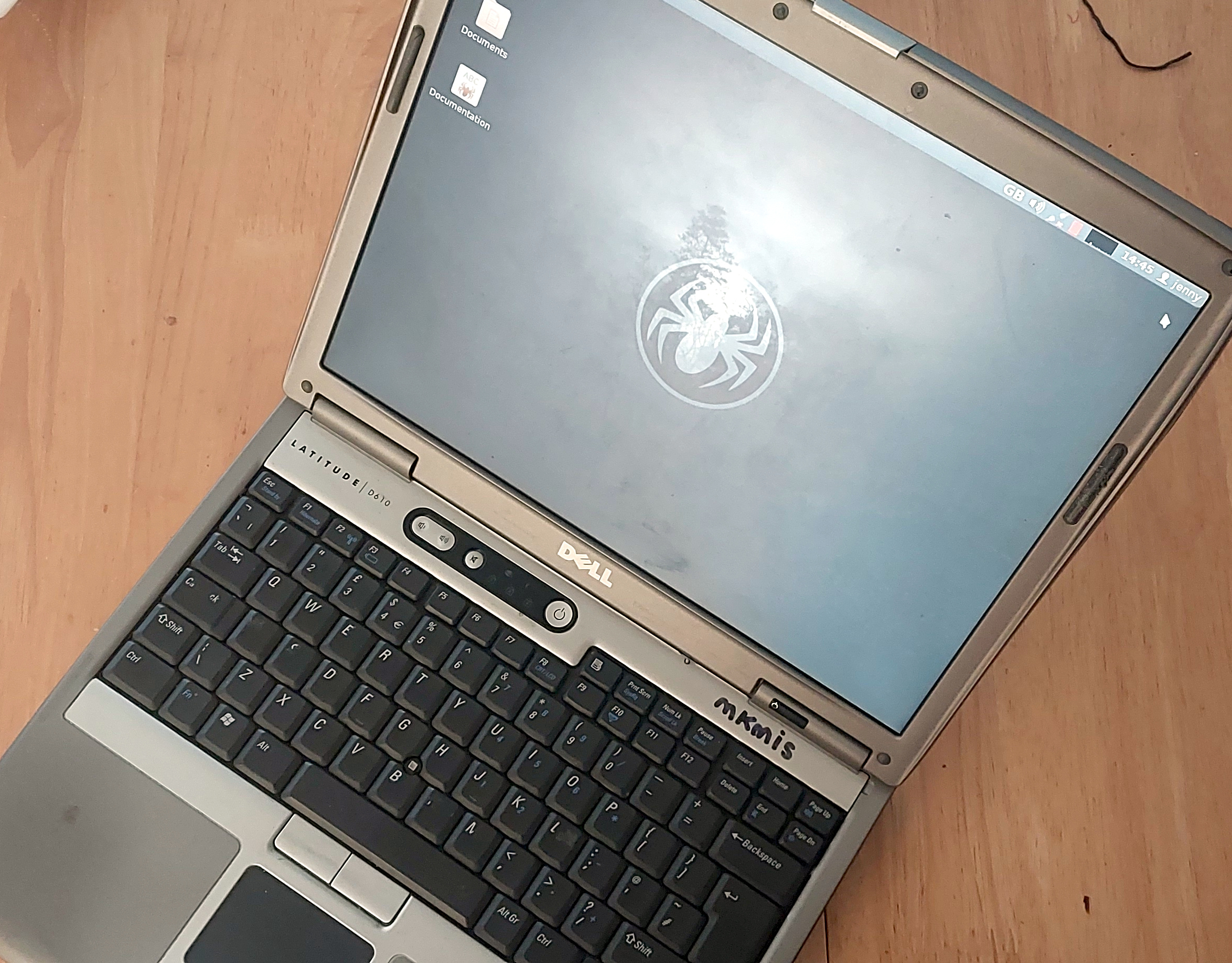

Not looking too bad for a 21 year old laptop.

On my desk I have a Dell Latitude D610. It was made in about 2005 in the days when Dells were solidly made, and with its 1.6GHz Pentium M and 2GB of memory it represents roughly the final throw of the dice for a 32-bit Intel laptop. Just over a year later it would have been replaced by one of the Intel Core series with the 64-bit instructions grudgingly adopted from AMD, but at the time it was a respectably useful machine.

It came into my possession about eight years ago when I used it to test the Revbank bar tab software for my hackerspace, and for the past six years it’s languished unloved in my box there. It’s got an ancient Ubuntu distro on it, so my first task is to pick a 32-bit replacement from 2026. That’s now a dwindling selection, so it’s time to start digging through some minimalist distros. With the supply of those based on mainstream distros drying up as they drop 32-bit support, it’s time to look into more esoteric offerings. This fits well with the ethos of this series, we’re all about the unusual here.

Cutting out the mainstream based distros certainly narrows the field, and out of the promising contenders in the minimalist field, I went for SliTaz. It uses BusyBox and the Openbox desktop, and it runs from RAM. I was looking for good application support in the repos, and this distro has the things I need. Download it, stick it on a USB stick, and let’s see what it can do. I know one thing, I wouldn’t have been able to download that ISO in five seconds with the internet connection I had in 2005.

SliTaz, A Tiny Distro That’s Really Useful

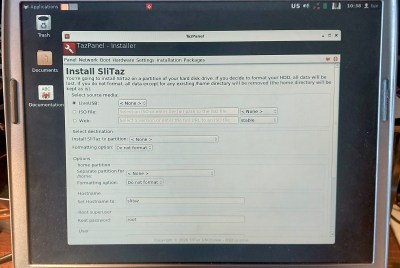

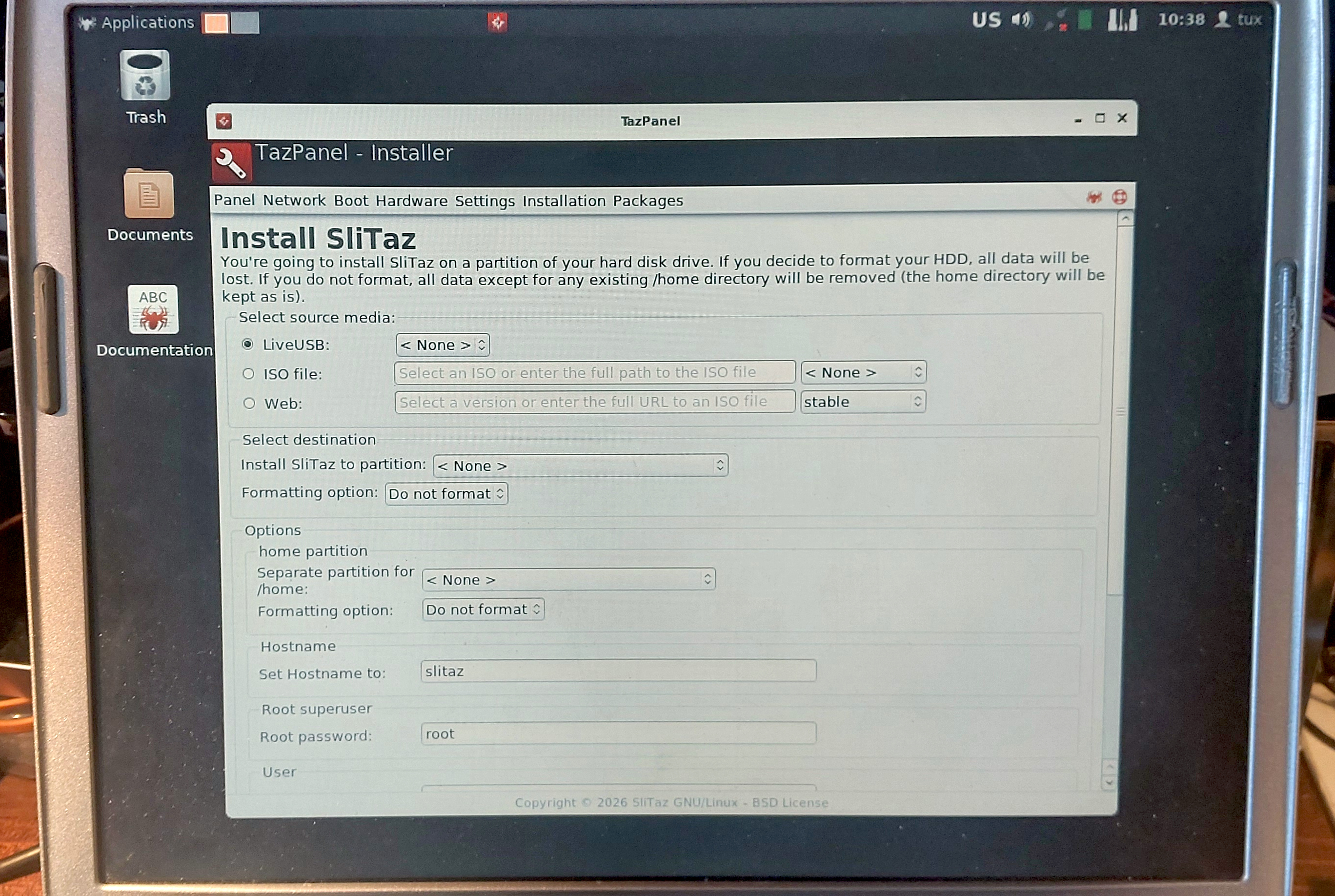

With a few of the type of quirks you’ll always encounter with a new distro, the SliTaz installation process was pretty painless. It required me to use Gparted to partition the spinning rust on the Dell, but otherwise the installation was mostly a case of filling in standard responses you’d find on any distro. Then it’s into the Openbox desktop environment. This thing is fast!

Installation is straightforward.

Graphical system administration is done through the Tazpanel application, which as far as I can see uses the web browser, and which soon had me connected to the internet and downloading GIMP so I could do my Hackaday work. The package library is comprehensive, which is pleasing to see. The default web browser is called Tazweb, which is modern enough to render most of the sites I normally use, but which for some reason didn’t like Hackaday’s WYSIWYG editor, so I was left writing in HTML source. It is quick though on this older hardware, something brought home to me when I downloaded Pale Moon. That browser is usable, but noticeably slow.

This was the first time using SliTaz for me, and I have to say I’m impressed. It’s small and fast compared to other full-fat distros I’ve used on machines of this age, and quirks aside, it’s easy to use and seems well supported. I’ve written most of this piece on it, and unlike some of the previous operating systems in this series, that has not been a painful experience. It has made the Dell into a useful machine again, one which while it’s no powerhouse, is at least no longer a piece of e-waste. There’s also a 64-bit version, making it a good choice for newer old hardware too. (The Raspberry Pi 1 port looks particularly interesting.)

Should You Though, Really?

It does all the important stuff.

So what have I proved here? A 21-year-old 32-bit machine is a bit slow but still usable here in 2026 with the right software, which is to my mind a testament to the skill and dedication of open source developers and maintainers for keeping this ancient architecture alive. Researching this piece though, it’s very obvious that much of the software necessary for modern computing is slipping out of 32-bit support, so I have to question how much longer they can keep it up. Considering that this machine has about the same intrinsic monetary value as a Core2-based machine made a little over a year later which supports 64-bit code, I have to concede that what I’ve just done is a fairly pointless exercise. It’s necessary to keep old hardware usable as long as possible, but when it’s lasted over two decades as this one has, then maybe we should concede that it’s time to move on. Find a 64-bit laptop from 2007, by all means install 64-bit SliTaz if you want a quick and small distro, and move forward with many more years of software support.

In a way the real star of this piece is the Dell itself. It was a corporate laptop, then as far as I know it was used by the Men In Sheds that shares the building with MK Makerspace, and when they tossed it I nabbed it to play with RevBank. Saved by a piece of Dutch open source software it’s sat unloved for years, and yet it’s still reliable, its battery still holds an almost useful charge, its keyboard is robust if a little worn, and its joints are still tight. It’s a shame the architecture is sliding out of relevance, this is almost a useful laptop!

hackaday.com/2026/04/30/jennys…

-

A Tractor From A Small Town Might Just Be The Catalyst For Ousting Machinery DRM


Odd things sometimes pop up in the feed of a Hackaday scribe, not hacks as such, but stories with a meaning in our community. One such that’s come our way from a variety of sources over the last week features Ursa Ag, a small machinery manufacturer based in Alberta, Canada. The reason they’re in the news is because they have gained bulging order books by taking on the likes of John Deere with a tractor more like the one their customers’ parents bought back in the ’80s or ’90s. It’s a basic machine without much in the way of electronics, and certainly without all the DRM lockdown that has made those big manufacturers so unpopular.

It’s clear that Hackaday isn’t in the business of shilling Canadian tractors, but it should be of interest to readers because it represents an alternative to the legal and consumer routes we’ve previously reported on for challenging the DRM lockdowns. The Ursa Ag tractor may be as niche Albertan as a Corb Lund CD, but it’s not the tractor itself but the idea which matters. We doubt much sweat will be shed by John Deere execs over a tiny company out on the prairies making a basic spec tractor, but given that Ursa Ag customers are reported as buying them because they have no DRM, the prospect of larger upstart competitors taking note and offering machines without it may cause them some sleep loss. The free market is held up to outsiders as perhaps the most American of ideals, and for it to eventually prove to be the means by which something intended to limit it might be defeated is sweet justice indeed.

We’ve reported extensively on the Deere tractor saga over the years, but perhaps the best illustration of the self-inflicted damage the brand has suffered through DRM comes in their older products being worth considerably more than their newer ones.

hackaday.com/2026/04/30/a-trac…

-

How TTY Opened Up The Phones For The Hard of Hearing


The telephone was an invention that revolutionized human communication. No more did you have to physically courier a letter from one place to another, or send a telegram, or have a runner carry the message for you. Instead, you could have a direct conversation with another person a great distance away. All well and good if you can speak and hear, of course, but rather useless if you happen to be deaf.

Those hard of hearing were not left entirely out of the communication revolution, however. Well before IP switched networks and the Internet became a thing, there was already a way for the deaf to communicate over the plain old telephone network—thanks to the teletypewriter!

Over The Wires

The teletypewriter (TTY) has been around for a long time. The first device came into being in 1964, developed by James C. Marsters and Robert Weitbrecht, both deaf. Their idea was to create a method for deaf individuals to communicate over the phone network in a textual manner. To this end, the group sourced teleprinters formerly used by the US Department of Defense, and hooked them up with acoustic couplers that would allow them to mate with the then-ubiquitous AT&T Model 500 telephone. Thus, the TTY was born. A user could dial another TTY machine, and key in a message, which would print out at the other end. The receiving user could then respond in turn in the same manner.

A Miniprint 425 TDD device. Note the acoustic coupler on top, the VFD for displaying messages, the printer, and the SK and GA keys which automatically key in these regularly-used abbreviations. Credit: public domain

The early machine used simple frequency-shift keying to encode the characters of the alphabet and some basic control codes, allowing text messages to be sent back and forth via a regular analog telephone call. In the US, where the devices eventually became known as telecommunications devices for the deaf (TDDs), the devices used an improved development of Baudot code (the USA-TTY variant of ITA-2) to send signals over the phone lines.

This involved representing characters with five bits. Five bits give only 32 codes, so shift codes switch between a letters set and a figures set, which is enough to cover the 26 letters of the English alphabet, plus 0-9 and a few control codes. Transmission rates were slow, typically just 45.5 to 50 baud. With a 5-bit code, this limited transmission to at most about 10 characters per second, and fewer once start and stop bits are counted.
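The rate arithmetic can be sketched in a few lines. Counting only the five data bits gives roughly 9 to 10 characters per second at the quoted rates. If we assume the usual asynchronous framing of one start bit and 1.5 stop bits per character (an assumption for illustration, not a figure from the article), the practical rate drops to about 6 characters per second. The function name and defaults below are hypothetical.

```python
def chars_per_second(baud: float, data_bits: int = 5,
                     start_bits: float = 1.0, stop_bits: float = 1.5) -> float:
    """Characters per second on an asynchronous serial link.

    The default framing (1 start bit, 1.5 stop bits) is an assumption
    made for illustration; the article only quotes the 5 data bits.
    """
    bits_per_char = start_bits + data_bits + stop_bits
    return baud / bits_per_char


for baud in (45.5, 50.0):
    data_only = chars_per_second(baud, start_bits=0.0, stop_bits=0.0)
    framed = chars_per_second(baud)
    print(f"{baud} baud: {data_only:.1f} cps (data bits only), "
          f"{framed:.1f} cps (with framing)")
```

Either way, the link is slow enough that the typist, rather than the line, is rarely the bottleneck.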

The sign on the left indicates a payphone with a TTY device attached. These were rare installs back in the landline era, and vanishingly few remain today. Credit: CC BY-SA 4.0

TTYs quickly caught on as a useful device for the deaf and hard of hearing, and developed their own norms similar to other textual telecommunications methods that came before. Users would key “GA” for “go ahead,” to indicate the other party could “speak” on the half-duplex link, as two users typing at the same time would lead to garbled messages. “SK” stood for “stop keying” to indicate the ending of a call. Abbreviations were common to save effort, such as “CU” (see you) and “TMW” (tomorrow).

Relay Service

At its heart, the TTY was a very useful device for allowing its users to communicate via textual means to others with compatible hardware. However, alone, a TTY could not allow a deaf user to communicate effectively with regular telephone users. To enable greater accessibility, many organizations developed telecommunications relay services.

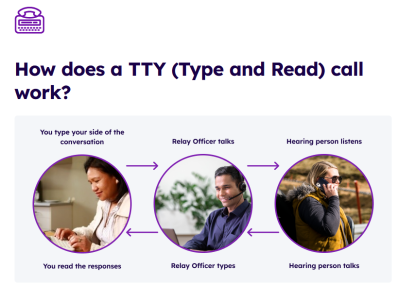

TTY machines led to the establishment of relay services that allowed deaf users to make regular phone calls with assistance from an operator. Credit: screenshot, Australian National Relay Service

These first existed as a number that deaf TTY users could call in order to connect to a human operator with their own TTY machine. This operator would place calls on behalf of the deaf individual, speaking on their behalf to other parties based on the deaf user’s inputs to their TTY device. In turn, the operator would key out the responses from the called party so the deaf individual could read back the conversation.

The first relay service was established by Converse Communications in Connecticut in 1974. The concept was quickly picked up by many other telecommunications operators around the world to provide an accessibility aid to those who needed it. These days, relay services still exist, though a great many relay services now operate over IP-based systems rather than via phone lines and TTY devices.

Hanging On

TTY still exists to some degree out in the world today. There are still subscribers with analog phone lines, and the basic TTY technology still fundamentally works over these links. However, the rise of SMS text messaging and widespread Internet connectivity have somewhat negated a lot of use cases for TTY technology these days. There have also been cases where digital upgrades to the phone network have made TTY operation more difficult, though some efforts have been made to ensure compatibility in some networks, particularly for emergency uses.

Ultimately, TTY was a technology that brought telecommunications access to a greater number of people than ever before. Like the landline phone and the fax machine, it’s no longer such a feature of modern life. However, it was an important link to the world for many in the deaf and hard of hearing community, and was greatly valued for the connection and accessibility it provided.

hackaday.com/2026/04/30/how-tt…

-

Transcribing the Source of the First DOS for the IBM PC


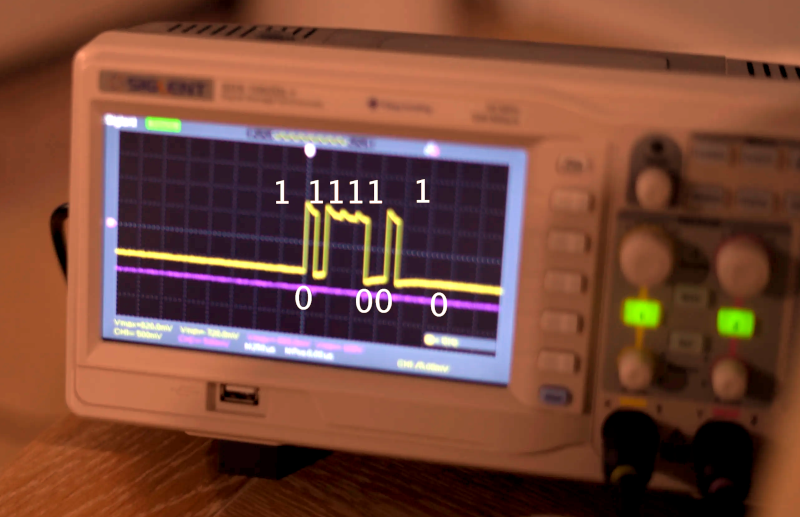

Doing software archaeology can be a harrowing task, as rarely do you find complete snapshots of particular versions of software. Case in point: the development of MS-DOS (also known as IBM PC DOS) from 86-DOS, which recently got a lucky break in the form of printed source listings. These printouts come courtesy of [Tim Paterson], the creator of 86-DOS and of MS-DOS during his time working for Microsoft.

These code listings contain the sources of the 86-DOS 1.00 kernel, multiple development snapshots, and also listings for utilities like CHKDSK. These printed listings additionally contain many handwritten notes, making transcribing it into working source code somewhat of a chore. The results can be found on the GitHub project page, with the original scans available on Archive.org.

Of the ten bundles of continuous-feed printouts, all but two have been transcribed so far, and the various DOS kernels and the Seattle Computer Products (SCP) assembler source are already ready to be assembled. This includes 86-DOS 1.00, MS-DOS 1.25 and PC-DOS 1.00-dev, all of which require the same SCP assembler to create a binary.

In the project page README a number of blog posts are also linked that add even more technical detail. Anyone who wants to pitch in with transcribing and/or testing recovered source code is welcome to do so.

hackaday.com/2026/04/30/transc…

-

Cyberattacks Are Shifting to the Edge

@informatica

TrendAI’s report puts the spotlight on the new techniques adopted by state-sponsored hackers. APT groups are driving the change by increasingly exploiting vulnerabilities in edge devices, and the adoption of AI risks making the situation worse.

The article “Cyberattacks Are Shifting to the Edge”

-

Building an x86 Gaming PC Without Intel, NVIDIA or AMD parts


This is an interesting challenge from the “why not?” files: [GPUSpecs] over on YouTube built a gaming PC without using a single component from NVIDIA, Intel, or AMD. That immediately makes us think of the high-power ARM workstations, or perhaps even the new “AI workstations” coming available with RISC-V architecture, but the challenge here was specifically “gaming PC,” not workstation. A gaming PC, without a GPU by one of those three? To make it even more interesting, the x86 CPU isn’t Intel or AMD either.

If you’re of a certain vintage, you may remember Cyrix. Cyrix reverse-engineered the x86 ISA and made their own compatible chips in the 90s, before being bought out by National Semiconductor, and then VIA Technologies. VIA partnered with the Government of Shanghai to found Zhaoxin, and it is from Zhaoxin that the KaiXian KX 7000 CPU hails — an x86-64 device, that isn’t Intel or AMD. We’ve actually covered the company before. This particular chip benchmarks like an old i5, so not spectacular, but usable.

The GPU is also Chinese: a Moore Threads MTT S80, with 16 GB of GDDR6 VRAM, 4096 shading units, 256 texture mapping units, and 256 ROPs. On paper, that looks like a very respectable graphics card, but it’s not clear how well the games [GPUSpecs] tested were actually using it. Based on the numbers he was getting in his testing, there are some serious driver issues with this card. Even Black Myth: Wukong, which is supposed to be a game the card targets, was sitting at 13.6 FPS on low settings and 1080p. That almost feels like integrated graphics numbers, not something a beefy GPU would give you, but it matches what other reviewers were saying when the card first came out.

So if you’re looking for a sanction-proof gaming rig, we’re sorry to say it’s not quite ready for triple-A. On the other hand, it’s a neat hack and we didn’t know this box could even get built. Right now, it looks like you will need at least one of the big three names to game on–you can game on ARM with NVIDIA graphics, or even with Intel graphics, and of course AMD, which has been in the works the longest.

youtube.com/embed/rmxIjPCAp6w?…

hackaday.com/2026/04/30/buildi…

-

DK 10x29 - A Roll of Data

What’s the difference between an “intelligent agent” that deletes your work and a language model that tells you the true story of bears in space?

-

Network Scanner Finds Every Raspberry Pi


DHCP is great for getting machines on the network with a minimum of fuss. However, it can also make remote administration a pain because you never know which IP you’re supposed to be SSHing into. [Philipp] ran into this problem quite often, so decided to whip up an app to make things easier.

At its heart, the app is a simple network scanner, of which many already exist. However, [Philipp] had found that many options on Android were peppered with ads that made them highly undesirable to use. Thus, he whipped up his own, with a particular eye to working with the Raspberry Pi. It’s not uncommon for a hacker to have a few scattered around the home network, and it can be a real chore keeping track of where they all end up in IP land. The scanner can specifically single out the Raspberry Pi boards on the network via MAC-OUI and mDNS detection. Plus, just in case you need it, [Philipp] threw in some GPIO pinouts and electronics calculators just to make the app more useful.
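For readers unfamiliar with MAC-OUI detection, the idea can be sketched as a prefix lookup: the first three bytes of a MAC address identify the vendor. This is a minimal illustration and not [Philipp]'s code; the function names are hypothetical, and the prefix set is a partial list from memory that should be checked against the IEEE registry.

```python
import string

# Partial set of OUIs registered to Raspberry Pi. This list is an assumption
# for illustration; consult the IEEE OUI registry for the complete set.
RASPBERRY_PI_OUIS = {"B8:27:EB", "DC:A6:32", "E4:5F:01", "D8:3A:DD"}


def normalize_mac(mac: str) -> str:
    """Return a MAC address as upper-case, colon-separated hex pairs."""
    digits = "".join(c for c in mac if c in string.hexdigits).upper()
    if len(digits) != 12:
        raise ValueError(f"not a 48-bit MAC address: {mac!r}")
    return ":".join(digits[i:i + 2] for i in range(0, 12, 2))


def is_raspberry_pi(mac: str) -> bool:
    """True if the vendor prefix (first three bytes) is a known Pi OUI."""
    return normalize_mac(mac)[:8] in RASPBERRY_PI_OUIS


print(is_raspberry_pi("b8-27-eb-12-34-56"))
print(is_raspberry_pi("00:11:22:33:44:55"))
```

A prefix match alone can miss boards with randomized or overridden MAC addresses, which is presumably why the app pairs it with mDNS detection.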

If you’ve been looking for an open-source network scanner without all the ugly junk, this project might just be for you. You can also check out the source over on GitHub if that’s relevant to your interests. We’ve seen some interesting custom network scanners before, too. If you’re whipping up some fun packet-flinging software of your own, don’t hesitate to notify the tipsline!

hackaday.com/2026/04/29/networ…

-

Why Model Collapse in LLMs is Inevitable With Self-Learning


There is a persistent belief in the ‘AI’ community that large language models (LLMs) have the ability to learn and self-improve by tweaking the weights in their vector space. Although there’s scant evidence that tweaking a probability vector space is anything like the learning process in biological brains, we nevertheless get sold the idea that artificial general intelligence (AGI) is just around the corner if we do just enough tweaking.

Instead of emerging super intelligence, the most likely outcome is what is called model collapse, with a recent paper by [Hector Zenil] going over the details on why self-training/learning in LLMs and similar systems is a fool’s errand. For those who just want the brief summary with all the memes, [Metin] wrote a blog post covering the basics.

In the end an LLM as well as a diffusion model (DM) is a statistical model of input data using which a statistically likely output can be generated (inferred) based on an input query. It follows intuitively that by using said output to adjust the model with, the model will over time converge on a kind of statistical singularity rather than some ‘AI singularity’ event. This is also why these models need to be constantly trained with external, human-generated data in order to prevent such a collapse.

In the paper by [Hector] a mathematical model is created to demonstrate that an LLM, DM or similar statistical model undergoes degenerative dynamics whenever said external input is reduced. Although in the paper a mechanism is suggested to counter the entropy decay within the model, the ultimate point is that a statistical model cannot improve itself without continuous external anchoring.
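The intuition can be shown with a toy experiment. This is not the model from the paper, just a minimal sketch under simple assumptions: the "model" is a Gaussian, and each generation it is refit to a small batch of its own samples with no external data. All names and parameters are hypothetical.

```python
import random
import statistics


def self_train(generations: int, sample_size: int, seed: int = 0) -> list[float]:
    """Refit a Gaussian to its own samples and track its spread.

    Returns the standard deviation of the model after each generation,
    starting with the original value of 1.0.
    """
    rng = random.Random(seed)
    mu, sigma = 0.0, 1.0
    history = [sigma]
    for _ in range(generations):
        # Generate "training data" purely from the current model.
        samples = [rng.gauss(mu, sigma) for _ in range(sample_size)]
        # Refit the model to its own output (maximum-likelihood estimates).
        mu = statistics.fmean(samples)
        sigma = statistics.pstdev(samples)
        history.append(sigma)
    return history


history = self_train(generations=200, sample_size=10)
for generation in (0, 50, 100, 200):
    print(f"generation {generation:3d}: sigma = {history[generation]:.3e}")
```

With each refit the estimated spread tends to shrink, so the distribution narrows toward a single point. Mixing fresh external samples into each batch is what stops the drift in this toy setting.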

The idea of LLMs being at all intelligent in any sense has been a contentious one, with the concept of language models being equated with ‘AI’ dating back to the 20th century, including as fun home computer projects. Much of the problem probably lies in humans projecting intelligent behavior onto these statistical models, turning LLMs into ‘counterfeit humans’, not helped by how closely generated text can resemble something written by a human, even if completely confabulated.

Thanks to [deshipu] for the tip.

hackaday.com/2026/04/29/why-mo…

-

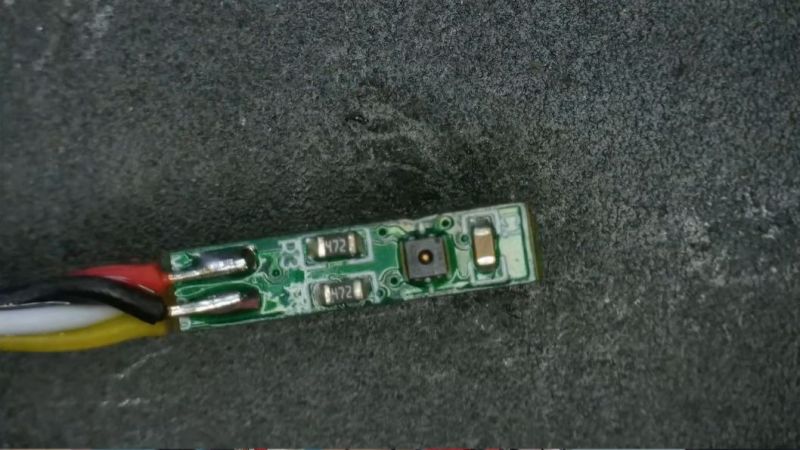

How to Kill Humidity Sensors With Humidity


An often overlooked section in the datasheets for popular humidity sensors like the BME280 and DHT22 is the ‘non-condensing humidity’ bit, which puts an important constraint on which environments you can use these sensors in. This was the painful lesson that [Mellow Labs] recently had to learn when several such sensors kicked the bucket after being used in a nicely steamed-up bathroom. Fortunately, it introduced him to sensors that are rated for use in condensing humidity environments, such as the SHT40 that’s demonstrated in the video.

This particular sensor is made by Sensirion, and as we can see in the datasheet it features a built-in heater that allows it to keep working even in a condensing environment. This heater has three heating levels which are controlled via the I2C interface, though duration is limited to one second in order to prevent overheating the sensor.

Of note is that you cannot take measurements while the heater is operating, and its use obviously increases power draw significantly. This then mostly leaves when to turn on the heater as an exercise to the engineer, with [Mellow Labs] opting to start the heater when relative humidity hit 70% as a conservative choice.
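The decision of when to fire the heater can be sketched as a small pure function, kept separate from any I2C driver. This is an illustration and not the code from the video: the command bytes and the humidity conversion formula are quoted from memory of the Sensirion SHT4x datasheet and should be verified there, and the function names are hypothetical. Only the 70% threshold comes from the article.

```python
# SHT4x command bytes as recalled from the Sensirion datasheet (an assumption;
# verify before use). Keys are (heater level, pulse duration in seconds).
HEATER_COMMANDS = {
    ("high", 1.0): 0x39, ("high", 0.1): 0x32,
    ("medium", 1.0): 0x2F, ("medium", 0.1): 0x24,
    ("low", 1.0): 0x1E, ("low", 0.1): 0x15,
}
MEASURE_HIGH_PRECISION = 0xFD

# Threshold chosen by [Mellow Labs] according to the article.
HEATER_THRESHOLD_RH = 70.0


def raw_to_rh(raw: int) -> float:
    """Convert a 16-bit raw humidity word to percent RH, clamped to 0..100."""
    rh = -6.0 + 125.0 * raw / 65535.0
    return min(100.0, max(0.0, rh))


def next_command(last_rh: float, level: str = "low",
                 duration_s: float = 1.0) -> int:
    """Pick the next command byte: a heater pulse above the threshold,
    otherwise a normal high-precision measurement."""
    if last_rh >= HEATER_THRESHOLD_RH:
        return HEATER_COMMANDS[(level, duration_s)]
    return MEASURE_HIGH_PRECISION


print(hex(next_command(raw_to_rh(45000))))
print(hex(next_command(raw_to_rh(20000))))
```

Keeping the policy apart from the bus code makes it easy to try other thresholds, or to rate-limit heater pulses to keep power draw down.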

In the comments to the video other options for suitable sensors were pitched, including the Bosch BME690 which is similarly rated for condensing environments. All of which condenses down to the importance of reading the datasheet for any part that you intend to use in possibly demanding environments.

youtube.com/embed/z-O44-vogfE?…

hackaday.com/2026/04/29/how-to…

-

Using a VT-100 Today


You may not know what an ADM-3, a TV910, or an H1420 are, but you probably have at least heard of a VT-100. They are all terminals from around the same time, but the DEC VT-100 is the terminal that practically everything today at least somewhat emulates. Even though a real VT-100 is rare, since it defined what have become ANSI escape sequences, most computers you’ve used in the last few decades speak some variation of the VT-100’s language. [Nikhil] wanted to see if you could use a VT-100 for real work today.

While the VT-100 wasn’t a general-purpose computer, it did have an 8080 inside. It only had about 3K of RAM, which was enough to act as a serial terminal. A USB serial port and a terminal with modern Linux, how hard could it be?

As it turns out there were a few issues. MacOS assumes terminals can take data at 9600 baud with no handshaking, apparently. The slow serial link also means that any application that assumes redrawing the whole terminal is fast will be sorry for that choice.
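A quick back-of-the-envelope calculation shows why full-screen redraws hurt. The sketch below assumes an 80x24 screen, 8N1 framing (10 bits on the wire per character), and no escape-sequence overhead; these are assumptions for illustration, and the function name is hypothetical.

```python
def redraw_seconds(columns: int = 80, rows: int = 24,
                   baud: int = 9600, bits_per_char: int = 10) -> float:
    """Time to send one full screen of characters over a serial link.

    bits_per_char=10 assumes 8N1 framing: 1 start, 8 data, 1 stop bit.
    Cursor-positioning and attribute escape sequences are ignored, so
    real redraws take longer.
    """
    return columns * rows * bits_per_char / baud


print(f"Full 80x24 redraw at 9600 baud: {redraw_seconds():.1f} s")
```

Two seconds per repaint is a best case, which is why applications that only update the changed parts of the screen feel so much better on real hardware.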

Of course, there are commands modern VT-100-like terminals accept that the original didn’t. However, as you’ll see in the post, all of these things you can either live with or solve.

It is easy to make your own VT-100 replica. While the VT-100 may seem simple today, it was a marvel compared to even older terminals.

hackaday.com/2026/04/29/using-…

-

FLOSS Weekly Episode 869: Linux on Your Toaster


This week Jonathan chats with Andrei, Mahir, and Praneeth, live on location at Texas Instruments! The team at TI has been working hard to provide really good Open Source support for Sitara processors, including upstreaming support to the mainline Linux kernel. We talk about the CI pipeline for these devices, the challenges of doing Open Source at a big company, and more. Check it out!

youtube.com/embed/diTClE65Ag0?…

Did you know you can watch the live recording of the show right on our YouTube Channel? Have someone you’d like us to interview? Let us know, or have the guest contact us! Take a look at the schedule here.

play.libsyn.com/embed/episode/…

Direct Download in DRM-free MP3.

If you’d rather read along, here’s the transcript for this week’s episode.

Places to follow the FLOSS Weekly Podcast:

Theme music: “Newer Wave” Kevin MacLeod (incompetech.com)

Licensed under Creative Commons: By Attribution 4.0 License

-

BlueNoroff and the Fake Zoom Meetings: How North Korea Uses AI and Deepfakes to Drain CEOs’ Crypto Wallets

@informatica

The North Korean group BlueNoroff has refined a multi-stage attack that combines deepfakes generated with ChatGPT, fake Zoom video calls, and ClickFix techniques to

RE: insicurezzadigitale.com/?p=855…

-

Bicycle Tubes Aren’t Just Made Of Rubber Anymore


For the average rider, inner tubes have been one of the most enduring and unchanging parts of bicycle design over the decades. They’re made of rubber, they have a Schrader or Presta valve, and they generally do an okay job at cushioning the ride.

However, if you’re an above-average rider, or just obsessive about your gear, you might consider butyl rubber tubes rather old hat. Today, there are far fancier—and more expensive—options on the market if you’re looking to squeeze every drip of performance out of your bike.

A Series Of Tubes

Butyl rubber inner tubes have a lot of things going for them, which is why they’ve been the standard forever. Rubber holds air well, and is easy enough to repair in the event of a puncture. It’s also cheap. However, there are some ways in which the butyl inner tube holds a bicycle back. A thick rubber tube isn’t exactly light; even in a road bicycle application, a single tube can weigh 100 grams or more. They also add to the rolling resistance of a wheel and tire combination. In these regards, other materials have the potential to offer greater performance.

Latex

Latex inner tubes tend to be the lightest available, with the lowest rolling resistance. However, they’re somewhat delicate and don’t always play well with rim brake setups. Credit: via Amazon

Latex is a material with many familiar uses, but it’s also recently become a popular alternative material for making inner tubes. It has the benefit of being very light, with a typical road bike latex tube saving 50 grams or more compared to the butyl rubber equivalent. The more flexible material also reduces rolling resistance by several watts at higher speeds, something which can make a real difference under competitive racing conditions. In a more qualitative sense, many riders also prefer the feel of riding on lighter latex tubes.

However, latex tubes also come with drawbacks. The ultra-thin, lightweight material can be susceptible to sudden failure from excessive heat, which can risk a crash in the worst cases. For this reason, the lightest latex tubes are often recommended for use on disc brake bikes only, due to the high temperatures that can be generated by rim brakes on a long descent. Latex tubes also lose air relatively quickly, and thus it’s recommended to pump up latex tubes to the required pressure ahead of every ride. They’re also difficult, but not impossible, to patch, and require some care to avoid damaging their thin walls during installation.

TPU

A Continental butyl rubber tube, pictured next to a pink TPU tube for comparison. Note how much less space the TPU tube takes up. Credit: MaligneRange, CC BY SA 4.0

You might be familiar with thermoplastic polyurethane (TPU) for its use as a flexible 3D printing filament. As it turns out, it’s also a viable material for producing bicycle inner tubes. TPU tubes shave off weight and rolling resistance compared to butyl rubber, albeit not quite as much as the finest latex tubes out there. They do, however, hold air a lot better than latex, reducing the need to reset tyre pressure before each ride. Ride quality is also generally considered better than rolling on traditional butyl rubber tubes. TPU tubes also fold up incredibly small—a largely meaningless benefit in use, but really helpful if you’re trying to pack a spare or three to take on a ride.

A TPU tube from Schwalbe. These tubes are known for being exceptionally thin and flexible, reducing weight and rolling resistance. Credit: Glory Cycles, CC BY 2.0

Unfortunately, TPU tubes can be quite expensive to procure, often double the price of latex and three or four times that of a butyl rubber tube. The thinnest versions can similarly be at risk of heat failures when used with rim brakes, so it’s important to check before installation if your TPU tubes are rated for use with disc brakes only. Puncture repair can also be difficult, though there are some specialist patches on the market if you wish to attempt it.

Roll, Roll, Roll

It’s worth noting that there is another way to go, as well. It’s possible to buy wheel and tire setups that eliminate inner tubes entirely. These “tubeless” systems offer a major weight reduction, and tend to have lower rolling resistance than even the lightest, most flexible tube setups out there. They’re not really a development of tube technology, but more so a divergence in wheel and tire design. In any case, they are pricy, and can require some special equipment to install and maintain. To allow them to self-heal in the event of minor punctures, they’re also typically filled with sealant. In the event of more serious damage, it’s often still possible to install a tube to keep riding, but this is an incredibly messy process that will get sealant all over you.

If you’re a regular commuter cyclist, butyl rubber tubes will probably remain your go-to choice. They’re the cheapest to buy, the easiest to repair, and any benefits from lighter, more efficient tubes are largely wasted on a commute. However, if you’re an avid road cyclist looking for the best feel and efficiency, or especially if you’re getting serious about racing, then you really ought to consider leaving butyl behind for something better. Happy cycling!

hackaday.com/2026/04/29/bicycl…

-

Digital Signal Processing on the Pi Pico

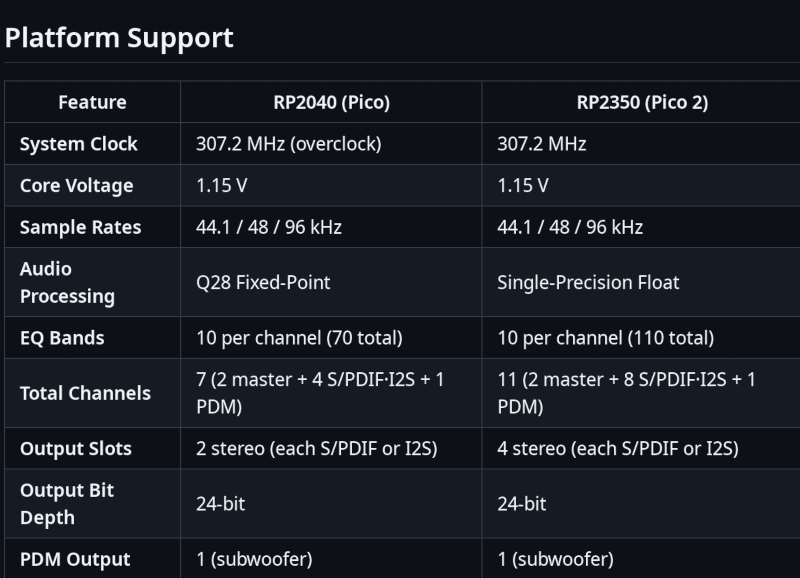

If you want to dabble in audio digital signal processing, you would probably think of grabbing a dedicated DSP chip. But thanks to [WeebLabs], you could just pick up a Pi Pico and use this full-featured DSP library.

The system supports a plug-and-play USB audio interface that enumerates on Windows, Linux, macOS, and iOS. It can handle 16- or 24-bit inputs at up to 96 kHz. You can output up to four channels of 24-bit S/PDIF or I2S, or switch to an RP2350 to get eight channels. This lets you drive a DAC easily. There is also a direct output for a subwoofer that doesn’t require a DAC.

Each channel has a pre-amp, and a matrix mixer allows routing with different gains and phases for each input. An equalizer allows ten bands per channel. There are also modules to do volume leveling, loudness compensation, and headphone cross-feed.
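If the matrix mixer idea is new to you, it boils down to a small table of gains: every output is a weighted sum of every input. Here’s a minimal sketch in Python, not the library’s actual code. The function name `mix`, the `GAINS` table, and the routing are all made up for illustration, and we’re assuming a phase flip can be modelled as a negative gain.

```python
def mix(inputs, gains):
    """Return one output sample per row of `gains`.

    inputs: one sample per input channel (floats).
    gains:  gains[o][i] is the signed gain from input i to output o.
            A negative gain stands in for a 180-degree phase flip.
    """
    return [sum(g * x for g, x in zip(row, inputs)) for row in gains]


# Hypothetical routing: stereo in, three outputs.
GAINS = [
    [1.0, 0.0],   # output 0: left input only
    [0.0, -1.0],  # output 1: right input, phase inverted
    [0.5, 0.5],   # output 2: mono sum, each input at half gain
]

outputs = mix([0.25, -0.5], GAINS)
print(outputs)
```

A real implementation would run this per sample (or per block) on fixed-size buffers, but the arithmetic is the same.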

The library uses both cores of the CPU and manages up to ten preset configurations. The Pico does get an overclock and uses a fixed-point representation. The Pico 2 (RP2350) doesn’t need overclocking and uses single-precision floating point.
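For anyone who hasn’t met fixed-point maths, the trick is to store fractional values as scaled integers so that a chip without a floating-point unit can still apply gains quickly. The sketch below assumes a Q1.15 format purely for illustration; the write-up doesn’t say which format the library really uses, and the helper names here are hypothetical.

```python
FRAC_BITS = 15  # assumed Q1.15 format; the library's real format is not stated


def to_fixed(x):
    """Convert a float in [-1, 1) to a scaled integer, with rounding."""
    return int(round(x * (1 << FRAC_BITS)))


def fixed_mul(a, b):
    """Multiply two fixed-point values and rescale back to FRAC_BITS.

    Adding half an LSB before the shift rounds instead of truncating.
    """
    return (a * b + (1 << (FRAC_BITS - 1))) >> FRAC_BITS


def to_float(q):
    """Convert a fixed-point integer back to a float."""
    return q / (1 << FRAC_BITS)


gain = to_fixed(0.5)
sample = to_fixed(-0.25)
scaled = fixed_mul(gain, sample)
print(scaled, to_float(scaled))
```

On real hardware the intermediate product needs a wider register than the operands, which is part of why the choice of format matters.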

Overall, this looks like a great base for any sort of soundcard-like project. We’ve seen DSP stunts on the Pico before. This might also make a nice base for other audio projects.

hackaday.com/2026/04/29/digita…

-

Ask Hackaday: Do You Need a Tablet?

There’s an old saying that the happiest days of a boat owner’s life are the day they buy the boat, and the day they sell it. For me, the happiest days of an Android tablet owner’s life are the day they buy a new one, and the day they buy a newer one. For some reason, I always buy tablets with great expectations, get them set up, and then promptly lose them in a pile on my desk, not to be seen again. Then a shiny new tablet gets my attention in a year or so, and the cycle repeats.

You might be thinking that I just buy cheap junk tablets. It is true that I have. But I have also bought new Galaxy and Asus tablets with the same result. Admittedly, I have owned several Surface Laptops and Pros, and I do use them. But I can’t remember the last time I have used one without the keyboard. They aren’t really tablets — they are just laptops that can also be heavy, awkward tablets.

Why?

I get the sense that iPad users get more use from their devices, but I’m not sure why. Maybe because Android tablets are really just blown-up phones. These days, my phone is big enough for most things. Sure, the tablet is bigger, but it isn’t that much bigger. In addition, my phone usually has a much better CPU, camera, and everything else. Not to mention it is constantly connected to the Internet, even if I’m not in range of a known WiFi router.

Read webpages? Phone. Play games? Phone. Deal with e-mail? Phone. The only advantage is if I put the tablet’s cheap Bluetooth keyboard on and use it like a laptop. But wait, I can just as well do that with the phone. Plus, voice typing for things like e-mails and messages is much better than it used to be.

Then there’s using it as a laptop replacement. When my laptop weighed a ton and got a few hours on a battery, that seemed like a good idea. But modern laptops don’t weigh that much, and they have pretty reasonable battery life, too. I always install some kind of Linux environment, like Termux and even Termux-X11, so I can use it as a lightweight Linux laptop. And I still don’t use it. (My setup is similar to the one in the video below, although you may have a few hiccups getting it all to work.)

youtube.com/embed/QCr4WWsfVv8?…

Desktop

Phone, tablet, or laptop, I’m still more likely to be found at my desk behind a big screen with a serious computer. Maybe it’s a generation gap, like clinging to a landline phone (I don’t) or a DVD player (another thing I don’t do). Maybe it is that most of the things I do on the computer benefit from large split screens and fast computing times.

Of course, there’s also the gadget factor. My desktop computer is huge and heavy, full of cards and water coolers, disk drives and fans. Some people trick out their cars. It is hard to expand most laptops, phones, and tablets, although I have had some success taking them apart for simple upgrades. They never seem to go back together quite right, though.

So Then?

So then what do I actually want a tablet for? I don’t know. Which leads me to ask you: what are you using a tablet for? Do you really use it regularly? Or is it another gadget collecting dust? It doesn’t count if you repurpose them for some dedicated use: a second screen, a touchpad, or a 3D printer controller. I mean using them as a replacement for your normal computing platform. Let us know in the comments.

Maybe I’d be happier making my own tablet.

hackaday.com/2026/04/29/ask-ha…

-

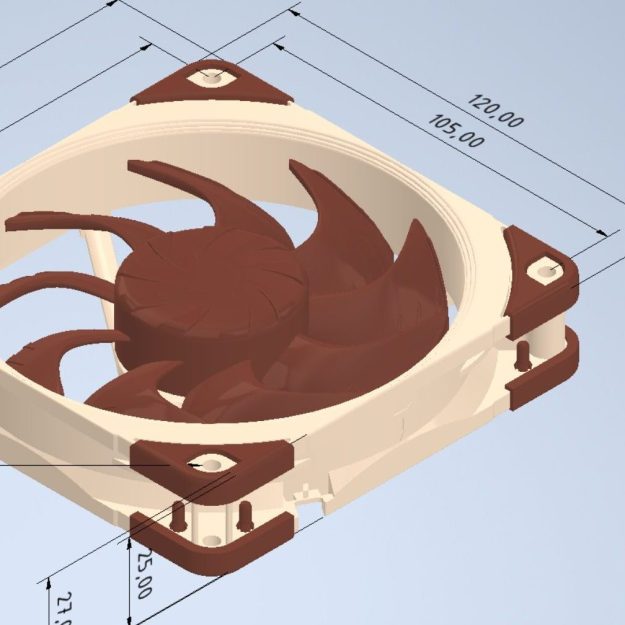

Noctua Releases 3D Models, But Please Don’t Try To Dupe The Products

Noctua wants to make life easier for fans of its…fans. To that end, the company has released a bevy of 3D models across its various product lines, all available to download for free.

If you’re not familiar with the company, Noctua specializes in high-quality cooling systems for the PC market. Its hope is that by freely providing 3D models of its components, it will aid aftermarket companies and DIYers that wish to integrate Noctua fans into their gear. In the company’s own words, these files are made available for “mechanical design, rendering, or animations.” They will let people check things like mountings and fitment without having to have the parts on hand, or to create demo visuals featuring the company’s products.

Don’t get too excited, though, because Noctua has already thought ahead. The company has specifically noted these parts aren’t intended for 3D printing, and critical components like fan blades have modified geometry so as to not compromise the company’s IP. You could try and print these models, but they won’t perform like the real thing, and Noctua notes they shouldn’t be used for simulation purposes either. They’re intentionally not accurate to what the company actually sells in that regard.

That isn’t to say Noctua is totally against 3D printing. They have lots of parts available on Printables that they’d love you to try—everything from fan grilles to ducts to anti-vibration pads. Most are useful accessories—the kind of little bits of plastic that make using the products easier—that don’t threaten Noctua’s core product line in the marketplace.

If you’re whipping up a custom PC case and you want to kit it out with Noctua goodies, these models might help you refine your design. It’s funny how it’s such an opposite tactic to that taken by Honda, in terms of embracing the free exchange of 3D models on the open Internet. It’s a move that will surely be appreciated as a great convenience, and we’d love to see more companies follow this fine example.

Thanks to [irox] for the tip!

hackaday.com/2026/04/29/noctua…

-

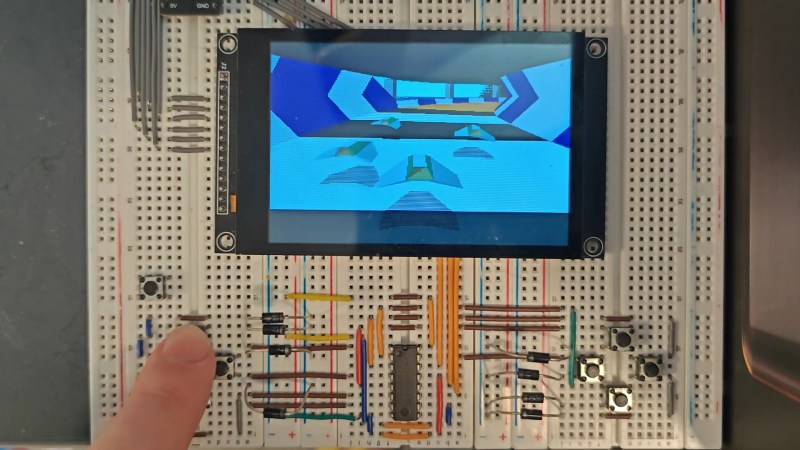

Wipeout Clone Runs Native on ESP32-S3

Psygnosis’s 1995 game Wipeout is remembered for two things: being one of the greatest games of all time, and taking advantage of the then-new PlayStation’s capacity for 3D graphics. The ESP32-S3 might not be your first choice to replace Sony’s iconic console, but [Michael Biggins] a.k.a. [PhonicUK] is working on doing just that, with his own clone of Wipeout on the Espressif MCU.

It’s actually not that crazy when you think about it. The PlayStation had a 32-bit RISC processor, and the ESP32-S3 is a 32-bit RISC processor. The PlayStation’s was only good for about 30 Million Instructions Per Second (MIPS) but it had a graphics co-processor to help out with the polygons; the ESP32-S3 has two cores that can help each other, which combine to about 300 MIPS. In terms of RAM, the board in use has 8 MB of PSRAM, while the faster 512 kB on the chip is used, in effect, as video RAM.

The demo is very impressive, especially considering he’s fit in three computer players. He’s also got it blasting out 60 frames per second, which is probably double what the original Wipeout ran at on the PS1. Part of that is the two cores in action: he’s got them working together on the interlaced video output, one sending while the other finishes the second half of the frame. Each half of the video gets dedicated space in the internal memory. Using a 480×320 pixel display doesn’t hurt for speed, either. Sure, it’s paltry by modern standards, but the original Wipeout got by with even fewer pixels, and it didn’t run on a microcontroller. Granted it’s a beefy micro, but we really love how [Michael] is pushing its limits here.
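To see why the two-halves scheme fits in on-chip memory, here’s a back-of-the-envelope budget. The 480×320 resolution, the 512 kB of internal RAM, and the 60 frames per second all come from the write-up above; the 16 bits per pixel is purely our assumption, since the colour depth isn’t stated, and the variable names are ours.

```python
WIDTH, HEIGHT = 480, 320       # display resolution from the write-up
BYTES_PER_PIXEL = 2            # ASSUMPTION: 16-bit colour (e.g. RGB565)
INTERNAL_RAM = 512 * 1024      # the 512 kB of on-chip RAM
FPS = 60                       # frame rate from the write-up

frame_bytes = WIDTH * HEIGHT * BYTES_PER_PIXEL  # one full frame
half_bytes = frame_bytes // 2                   # one buffer per half frame
left_over = INTERNAL_RAM - frame_bytes          # RAM left for everything else
pixels_per_second = WIDTH * HEIGHT * FPS        # fill rate needed at 60 fps

print(f"full frame: {frame_bytes} bytes, each half: {half_bytes} bytes")
print(f"left in internal RAM: {left_over} bytes")
print(f"pixel rate: {pixels_per_second} pixels/s")
```

Under that assumption a whole frame takes a little over half of the internal RAM, which would leave room for the two half-frame buffers to live there side by side.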

Right now there’s just the Reddit thread and the demo video below. [Michael] is considering sharing the source code for his underlying 3D engine under an open license. We do hope he shares the code, as there are surely tricks in there some of us here could learn from. If it’s all old hat to you, perhaps you’d rather spend a weekend learning raytracing.

youtube.com/embed/aKkb5L-YTTc?…

hackaday.com/2026/04/29/wipeou…

-

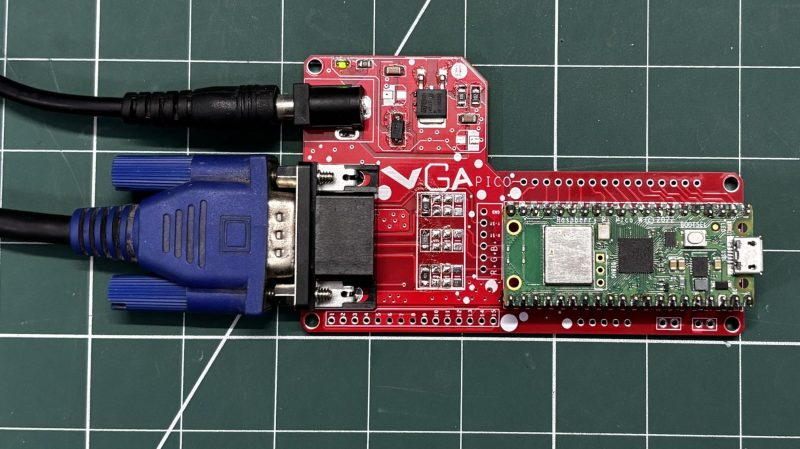

Compact VGA Output Board For The Pi Pico

Many microcontrollers can spit out simple analog video signals if that’s something you desire. However, it normally requires a bit of supporting hardware and, of course, the right connectors to work with your other video equipment. [Arnov Sharma] took that into account when whipping up this neat VGA board for the Raspberry Pi Pico.

VGA output in this case is achieved via judicious use of the Pi Pico’s PIO subsystem, which is perfect for clocking out the signals for red, green, and blue along with HSYNC and VSYNC as needed. The Pico slots right into [Arnov’s] custom PCB, which makes it a cinch to hook everything up. Supporting hardware is minimal, requiring just a few resistors between the Pico and the DE-15 VGA connector. There’s also a nice LM317 regulator on board to supply power to everything. [Arnov] also whipped up a modified version of the VGA library from [Pancrea85], which allows the Pico to output VGA in a way that’s more accepted by more recent TFT displays as well as older CRTs. The system is demoed with a few basic Hello, World programs, as well as a neat recreation of Conway’s Game of Life.
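To get a feel for what the PIO has to clock out, it helps to run the numbers for a VGA mode. The sketch below assumes the common 640×480 at 60 Hz mode with its usual 25.175 MHz pixel clock; the write-up doesn’t say which mode [Arnov]’s board actually uses, so treat the figures as an example rather than a description of the project. The `Axis` class is just a hypothetical helper for the sums.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Axis:
    """Timing for one axis, in pixels (horizontal) or lines (vertical)."""
    visible: int
    front_porch: int
    sync: int
    back_porch: int

    @property
    def total(self):
        return self.visible + self.front_porch + self.sync + self.back_porch


# ASSUMPTION: standard 640x480 @ 60 Hz timing, not confirmed for this board.
PIXEL_CLOCK_HZ = 25_175_000
H = Axis(visible=640, front_porch=16, sync=96, back_porch=48)
V = Axis(visible=480, front_porch=10, sync=2, back_porch=33)

line_rate_hz = PIXEL_CLOCK_HZ / H.total   # how often HSYNC pulses
frame_rate_hz = line_rate_hz / V.total    # how often VSYNC pulses

print(f"{H.total} clocks per line, {V.total} lines per frame")
print(f"HSYNC: {line_rate_hz:.2f} Hz, VSYNC: {frame_rate_hz:.2f} Hz")
```

The sync pulses and porches are why the pixel clock is noticeably higher than 640 × 480 × 60 would suggest.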

If you want to get a Pi Pico hooked up to a big screen quickly, whipping up a board like this is a great way to go. If you’re wanting something more advanced, though, you could always explore DVI and HDMI on the same platform. Video after the break.

youtube.com/embed/VbxdEa9tA2c?…

hackaday.com/2026/04/28/compac…

-

Recycling PLA and Other Plastic Waste with Compression Molding

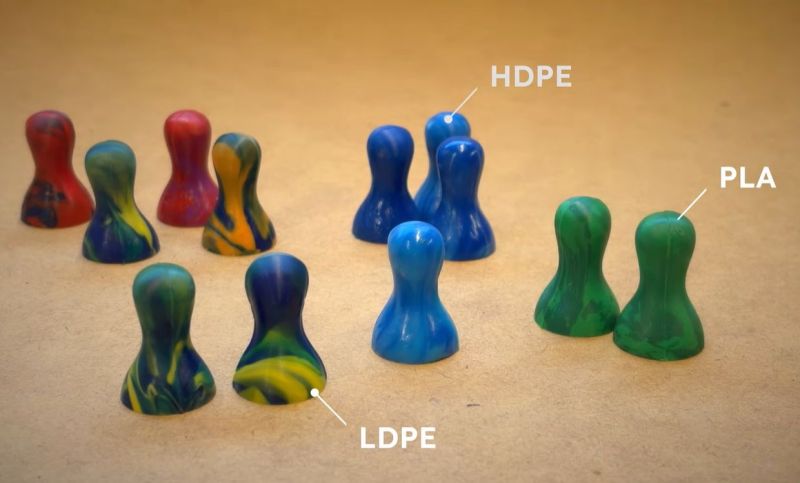

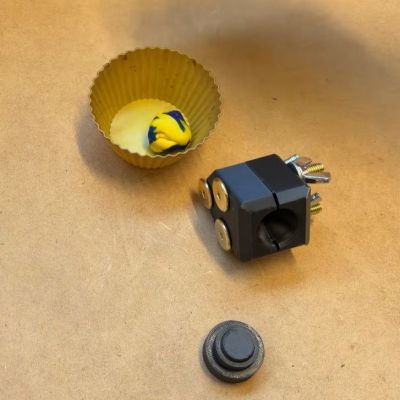

After previously trying out low-tech compression molding with a toaster oven and 3D printed molds, [future things] is back with a video that explores some of the questions raised after the first one: how well this method works with HDPE and PLA thermoplastics, whether the flashing could be cut off by the mold, and what temperatures and times are needed to heat the plastic before a charge is ready to insert into the mold.

In this video the same PHA-based mold is used, but in a three-piece configuration to allow for a more complex shape. This way game tokens could be made for use by the son of the author, which also shows one straightforward and very practical use of this method.

A big change here is that metal chopsticks are no longer used to handle the charge, as they were found to cool down the heated plastic too much. Instead the hot charge is handled with fingers and wooden chopsticks, with the plastic heated until it has about the consistency of thick honey. For LDPE this takes about 5-7 minutes at 130°C. After compressing the charge into the mold, about 30 seconds is all it takes for the plastic to cool down enough.

There was a question about the use of mold release spray, but this didn’t seem to cause any issues, so it can probably be used safely. As for other plastic types, HDPE works fine too if you heat it to a slightly higher temperature and don’t mind it being tougher to handle.

Easiest is probably PLA, which would seem unsurprising. Using some chopped-up PLA printing waste it was easy enough to make a few more game tokens, demonstrating that this method is very viable for converting scrap FDM print waste into such items. As noted in the comments by [edmundchao] this method works great too for PETG, using PETG molds, while using a ratcheting clamp for extra pressure instead of just pressing by hand.

youtube.com/embed/R9bohPNgblk?…

hackaday.com/2026/04/28/recycl…